Ángel Alexander Cabrera

What Did My AI Learn? How Data Scientists Make Sense of Model Behavior

Ángel Alexander Cabrera, Marco Tulio Ribeiro, Bongshin Lee, Rob DeLine, Adam Perer, Steven M. Drucker

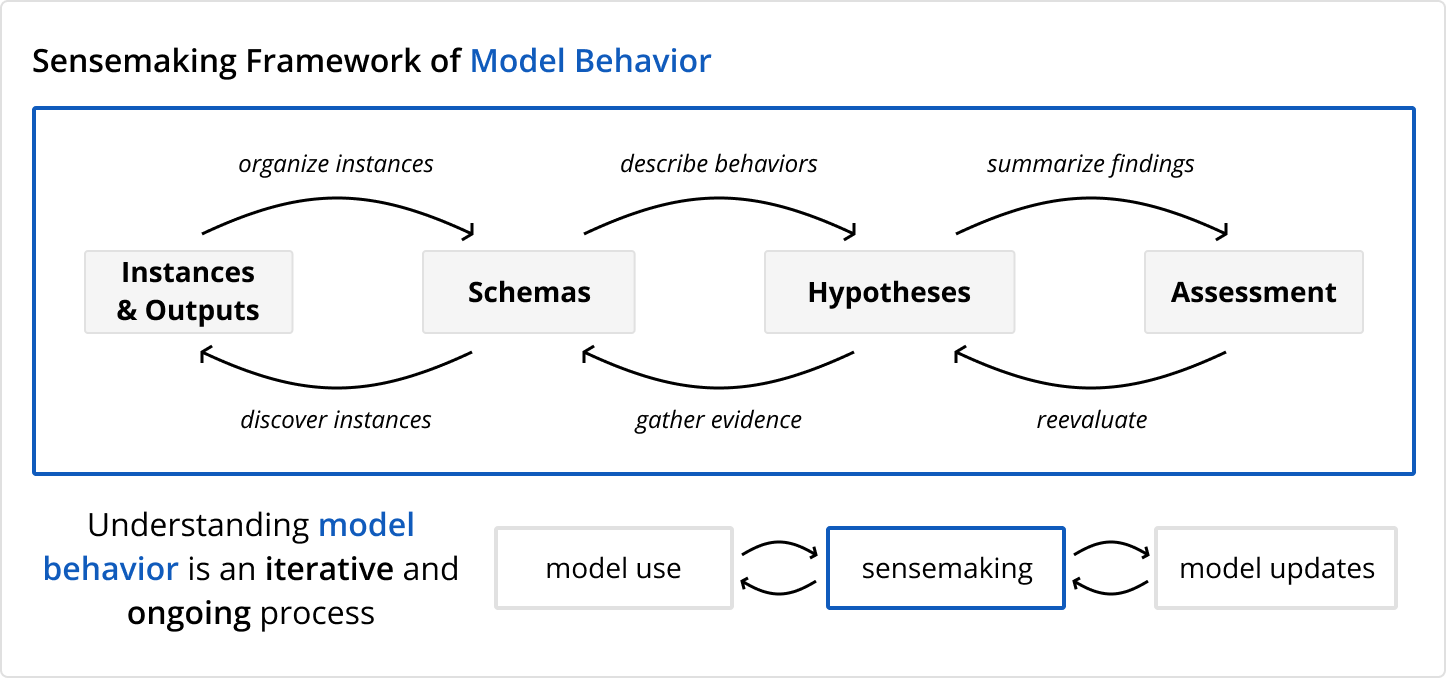

Data scientists require rich mental models of how AI systems behave to effectively train, debug, and work with them. Despite the prevalence of AI analysis tools, there is no general theory describing how people make sense of what their models have learned. We frame this process as a form of sensemaking and derive a framework describing how data scientists develop mental models of AI behavior. To evaluate the framework, we show how existing AI analysis tools fit into this sensemaking process and use it to design AIFinnity, a system for analyzing image-and-text models. Lastly, we explored how data scientists use a tool developed with the framework through a think-aloud study with 10 data scientists tasked with using AIFinnity to pick an image captioning model. We found that AIFinnity's sensemaking workflow reflected participants' mental processes and enabled them to discover and validate diverse AI behaviors.

Citation

What Did My AI Learn? How Data Scientists Make Sense of Model Behavior

Ángel Alexander Cabrera, Marco Tulio Ribeiro, Bongshin Lee, Rob DeLine, Adam Perer, Steven M. Drucker

ACM Transactions on Computer-Human Interaction (TOCHI). 2023.

BibTeX

@article{Cabrera2023AIfinnity,

author = {Cabrera, \'{A}ngel Alexander and Tulio Ribeiro, Marco and Lee, Bongshin and Deline, Robert and Perer, Adam and Drucker, Steven M.},

title = {What Did My AI Learn? How Data Scientists Make Sense of Model Behavior},

year = {2023},

issue_date = {February 2023},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

volume = {30},

number = {1},

issn = {1073-0516},

url = {https://doi.org/10.1145/3542921},

doi = {10.1145/3542921},

journal = {ACM Trans. Comput.-Hum. Interact.},

month = {mar},

articleno = {1},

numpages = {27},

}